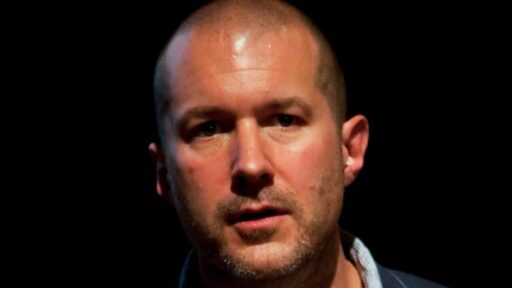

OpenAI has confirmed that it has built its first hardware prototype in collaboration with former Apple designer Jony Ive, a significant step in the companies' joint AI hardware venture. Ive and OpenAI CEO Sam Altman disclosed the prototype during an interview conducted by Laurene Powell Jobs, emphasizing a screen-free design intended to redefine how people interact with artificial intelligence.

Background on the OpenAI-Jony Ive Partnership

The collaboration between OpenAI and Jony Ive grew from exploratory conversations about what an AI-native device should look like into a formal design program led by Ive's firm, LoveFrom. According to reporting on how LoveFrom moved from early concept work to building physical objects, the studio now has a prototype of what is described as the first piece of OpenAI hardware. That progress shows the partnership has shifted from brainstorming to industrial design and engineering in a relatively short time. For OpenAI, bringing in the designer behind the original iMac and iPhone signals an ambition to shape not only its software models but also the physical form through which people access AI every day.

As OpenAI CEO, Sam Altman has been central in driving the project from idea to prototype, and he has now publicly confirmed alongside Ive that the device exists and is in active development. Coverage of their joint appearance describes Altman as treating dedicated hardware as a natural extension of OpenAI's model roadmap, a way to embed AI more seamlessly into daily routines rather than confining it to phones and laptops. That framing matters to investors, developers, and competitors because it suggests OpenAI intends to compete directly in consumer hardware rather than relying solely on partners' devices.

Confirmation of the Prototype Milestone

OpenAI's confirmation that it has a first hardware prototype built with Jony Ive, reported on November 24 and 25, 2025, turned months of speculation into a concrete milestone. TechSpot's coverage of OpenAI confirming its first hardware prototype built with Jony Ive states that the company has acknowledged a physical device developed with LoveFrom, describing it as OpenAI's first internally defined hardware product and tying it explicitly to the broader AI hardware venture that Altman and Ive have been quietly assembling. By moving from rumor to official confirmation, OpenAI is signaling to the market that its hardware plans are no longer an experimental side project but part of its core strategy for how people will access its models.

Separate reporting on November 24, 2025, describes the device as the first piece of OpenAI hardware developed by LoveFrom to formally reach prototype status, indicating that the design studio has moved beyond sketches and mockups to testable units. That account notes that LoveFrom is not merely consulting on aesthetics but is directly responsible for the hardware's industrial design, reinforcing that Ive's team is embedded in the product's creation rather than acting as a distant advisor. Because the milestone has been reported across multiple outlets, hardware partners, component suppliers, and software developers now have a clearer signal that OpenAI-branded devices may eventually sit alongside smartphones, laptops, and smart speakers.

Key Features of the Screen-Free Device

Jony Ive and Sam Altman have both emphasized that the device's core attribute is its screen-free design, which they present as a deliberate break from the dominant model of accessing AI through glowing rectangles. In the interview, as covered by Mashable's report that ex-Apple designer Ive and OpenAI CEO Sam Altman have confirmed a prototype of their secret screen-free AI device, the pair said the hardware is being built around non-visual interaction, with AI meant to fade into the background rather than demand constant visual attention. That approach could affect how people manage focus, privacy, and accessibility, since a screenless device would likely favor ambient, voice-first, or sensor-driven experiences over the tap-and-swipe behavior that dominates smartphones.

Coverage of the project repeatedly describes the prototype as a mysterious piece of AI hardware, with executives offering only high-level hints about its non-visual interface rather than detailed specifications. Reports on the interview, including 9to5Mac's account of Laurene Powell Jobs interviewing Jony Ive and Sam Altman about the mysterious AI hardware, note that the pair framed the device as an attempt to rethink how AI should manifest in the physical world, suggesting that the interface may rely on subtle cues, audio, or other modalities instead of a conventional display. For consumers and regulators, that mystery cuts both ways: it generates excitement about a potentially less intrusive form of computing, but it also raises questions about how such a device will handle data collection, consent, and transparency without the familiar visual prompts of a screen.

Timeline and Future Launch Plans

OpenAI executives are already sketching a timeline for the device. TechBuzz's report that OpenAI has revealed its first hardware prototypes and is eyeing a two-year launch says the company is targeting a roughly two-year path to market from the November 24, 2025, revelation that prototypes exist; if that schedule holds, a launch would come by roughly late 2027. An explicit horizon like this matters to component makers, retail partners, and software developers, who typically need long lead times to align manufacturing capacity, distribution channels, and app ecosystems around a new category of device.

Executives have also said they plan to reveal the device in two years or less, which several reports frame as an acceleration from earlier, less concrete rumors about the project's timing. CNBC's coverage of executives saying OpenAI has its first hardware prototypes and plans to reveal the device in two years or less reports that company leaders want to show the device publicly within that window, a stance that suggests internal confidence in the maturity of the design and engineering work. For rivals in AI and consumer electronics, that timeline effectively starts a countdown, giving companies like Apple, Google, and Amazon a clearer sense of when they might face a new kind of AI-native hardware competitor and possibly prompting them to reassess their own device roadmaps.

Role of Laurene Powell Jobs and Broader Stakeholder Interest

The public confirmation of the prototype came during an interview conducted by Laurene Powell Jobs, whose involvement through her organization, Emerson Collective, shows the project drawing attention from influential figures beyond traditional tech and venture capital circles. Reports on the event describe Powell Jobs using the conversation to probe how Ive and Altman think about the social and cultural implications of embedding AI in a dedicated device, rather than focusing solely on technical specifications. That framing matters because it situates the hardware not just as a gadget but as a potential touchpoint for debates about education, labor, and information access, areas where Emerson Collective has been active.

Coverage of the partnership also notes that LoveFrom's work on the prototype is being watched closely by design- and Apple-focused observers, who see the project as a test of how Ive's post-Apple studio will shape the next generation of consumer technology. AppleInsider's coverage that LoveFrom has a prototype for the first piece of OpenAI hardware explains that the studio's involvement has already shaped expectations about build quality, materials, and the overall feel of the device. For designers, policy advocates, and educators, a high-profile design firm working hand in hand with an AI research company, under the public eye of figures like Laurene Powell Jobs, suggests that the conversation about AI hardware will be as much about values and human experience as about processing power or model size.

Market and Ecosystem Implications of a Screenless AI Device

Reporting on the project's trajectory indicates that OpenAI and Jony Ive's screenless AI device could be released within the next two years, consistent with the company's broader prototype and launch timeline. Hypebeast's report that the screenless device could release in the next two years states that it has reached the prototype stage and frames it as a potential new category of AI-first hardware that sits somewhere between a smart speaker and a wearable. For app developers and service providers, that prospect raises practical questions about designing experiences for a device that may lack a traditional display, pushing them to think in terms of conversational flows, audio cues, and context-aware automation rather than visual interfaces.

Taken together, the confirmation of a working prototype, the explicit two-year launch target, and the involvement of high-profile figures like Jony Ive, Sam Altman, and Laurene Powell Jobs signal that AI-native hardware is moving from speculative concept to near-term reality. As executives share more about the device's non-visual interface and its role in OpenAI's ecosystem, stakeholders across consumer electronics, software, and policy will need to grapple with what it means for AI to be embodied in a dedicated, screen-free object that could sit in homes, offices, or classrooms. The stakes are high because whichever company defines the dominant pattern for AI hardware interaction may shape not only how people talk to machines, but also how they expect those machines to listen, respond, and fit into the rhythms of everyday life.